Jul 17, 2025·7 min

Internationalized email addresses: what breaks in production

Internationalized email addresses can fail at signup due to IDNs, Unicode normalization, and SMTPUTF8 gaps. Learn safe validation steps.

Why valid-looking emails break in production

An email address can look normal and still fail the moment it hits a real system. The biggest reason is that what people see as one character might be stored as different bytes, handled differently by libraries, or rejected by older mail servers.

A common example is something like søren@exämple.com. It can be a legitimate address, but it touches several fragile points at once: Unicode in the local part, an internationalized domain name, and mail infrastructure that may or may not support it.

Breaks also show up far away from your signup form. You might accept the address, then fail to send a password reset because your email provider (or an intermediate relay) doesn’t support SMTPUTF8. Or your regex accepts it, but your "lowercase and trim" step changes it into something different from what the user intended.

False rejections are expensive because they’re often silent. People abandon signups, retry with a different email, or open support tickets that are hard to reproduce. If you have a global audience, rejecting internationalized email addresses can also look like you simply don’t support their language.

False accepts cost you in a different way. Bad addresses lead to bounces, lower deliverability, and messy lists. Some valid-looking inputs are deliberately harmful: disposable domains, role accounts used for abuse, or spam traps that damage sender reputation.

The goal isn’t "accept everything" or "block everything." It’s to accept real users while stopping obviously bad input, using checks that match how email actually works: separate display from storage, verify that the domain can receive mail (not just that the address contains an @), and avoid aggressive transformations that quietly rewrite what the user typed.

What counts as an internationalized email address

An email address has two parts separated by @: the local part (before @) and the domain (after @). Internationalized email addresses can include Unicode characters in either part, and real-world support varies depending on which side uses Unicode.

The most common case is an internationalized domain name (IDN). The user sees a domain written in their script, like maria@bücher.example or li@例子.公司. Under the hood, many systems convert that domain to an ASCII form (often called punycode) so DNS lookups and MX checks work reliably.

The trickier case is Unicode in the local part, like ユーザー@domain.com. This falls under Email Address Internationalization (EAI) and is typically delivered using SMTPUTF8. Even if the address is valid by modern standards, some mail servers, older libraries, and downstream tools still reject it. That means you can accept it at signup and still fail later when you try to send.

A practical way to think about support today:

- Unicode domains are widely usable because they can be represented in ASCII for DNS.

- Unicode local parts are less consistently supported end to end (signup form, database, CRM, email provider, and mailbox all need to handle them).

- Mixed scripts and look-alike characters can increase fraud risk.

- Display and storage break when software assumes "one character equals one byte."

This is why a single yes/no regex isn’t enough. A regex can reject legitimate users (for example, by blocking non-ASCII outright), or accept addresses you can’t actually deliver to.

Better validation treats each side separately. For the domain, normalize and convert to ASCII for DNS checks. For the local part, you need a policy decision: do you support SMTPUTF8 fully, do you allow it with a warning, or do you block it because your email stack can’t reliably deliver?
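A minimal Python sketch of that split follows. The function name `split_address` is hypothetical, and the check is structural only, not a full syntax validator. It splits on the last @ because a quoted local part may itself contain one.

```python
def split_address(address: str) -> tuple[str, str]:
    """Split on the last '@' so each side can be checked on its own."""
    local, at, domain = address.rpartition("@")
    if not (at and local and domain):
        raise ValueError("expected a local part, '@', and a domain")
    return local, domain


local, domain = split_address("maria@bücher.example")

# Unicode local part: delivery depends on SMTPUTF8, so this is a policy decision.
needs_smtputf8 = not local.isascii()

# Unicode domain: convert to ASCII before any DNS or MX lookup.
is_idn = not domain.isascii()

print(local, domain, needs_smtputf8, is_idn)
```

Keeping the two flags separate lets the domain go through conversion while the local part goes through whatever policy you choose.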

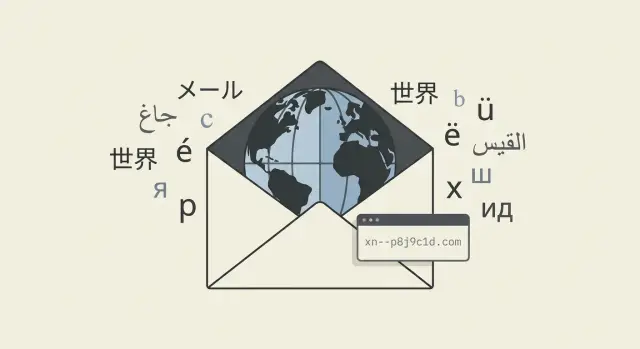

IDNs and punycode: the domain side of the problem

An IDN (Internationalized Domain Name) is a domain that uses non-ASCII characters, like accents or non-Latin scripts. People see and type the Unicode form, but DNS is built around ASCII. That mismatch is where many production bugs start.

To look up MX records, verify a domain, or even do a basic DNS check, you usually need the ASCII form of the domain. That conversion follows IDNA rules and encodes each non-ASCII label as punycode with an xn-- prefix. So a user might enter a domain that looks normal to them, but your logs, database, or upstream provider might show an xn--... version instead.

Punycode shows up in places you should expect and handle:

- DNS and MX lookups

- API calls to validation or deliverability services

- Logs and security tools (so analysts can copy and paste safely)

- Storage and deduping (so the same domain isn’t stored twice in different forms)

Two common mistakes:

- Validating only the Unicode domain as a string and never converting it before DNS checks.

- Rejecting any domain with non-ASCII characters, which blocks legitimate users.

There’s also a security angle. Mixed-script and look-alike characters can be used to create domains that visually resemble a trusted brand. You usually shouldn’t block all IDNs because of this, but it’s reasonable to treat them as higher risk in contexts like payments, password resets, or admin access.
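One rough way to flag mixed-script labels is sketched below in Python. It guesses a script from the first word of each letter's Unicode character name, which is a heuristic and not a real script database. It will also flag legitimate combinations, such as Japanese labels that mix kanji and kana, so treat a hit as a risk signal rather than a reason to reject. The function names are hypothetical.

```python
import unicodedata


def scripts_in_label(label: str) -> set[str]:
    """Rough script guess: the first word of each letter's Unicode name."""
    scripts = set()
    for ch in label:
        if ch.isalpha():
            name = unicodedata.name(ch, "")
            if name:
                scripts.add(name.split(" ")[0])  # e.g. "LATIN", "CYRILLIC"
    return scripts


def looks_mixed_script(domain: str) -> bool:
    """True if any single label mixes letters from more than one script."""
    return any(len(scripts_in_label(label)) > 1 for label in domain.split("."))


# "pаypal" below uses a Cyrillic "а" (U+0430) in place of the Latin "a".
print(looks_mixed_script("p\u0430ypal.example"))
print(looks_mixed_script("bücher.example"))
```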

A practical approach is to accept what the user types, then normalize internally:

- Accept the Unicode domain in the input field.

- Convert the domain to ASCII (punycode) for DNS and MX checks.

- Store both forms when possible: Unicode for display, ASCII for technical checks and consistent matching.

- Flag suspicious patterns (mixed scripts, confusables) for extra review or step-up verification.
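The conversion and dual storage above could look like this in Python. This sketch uses the standard library's `idna` codec, which implements the older IDNA 2003 rules. Whether that matches your registry's rules is an assumption to verify, and many projects use a dedicated IDNA 2008 library instead. The function name `domain_forms` and the dictionary keys are hypothetical.

```python
def domain_forms(domain: str) -> dict[str, str]:
    """Return the Unicode form for display and the ASCII form for DNS work."""
    # Lowercase first: the codec leaves pure-ASCII labels unchanged.
    # It raises UnicodeError for empty or over-long labels.
    ascii_domain = domain.lower().encode("idna").decode("ascii")
    return {"display": domain, "ascii": ascii_domain}


forms = domain_forms("Bücher.example")
print(forms["display"])  # what the user typed, for the UI
print(forms["ascii"])    # what DNS and MX lookups should receive
```

Matching and deduping on the `ascii` value keeps one domain from being stored twice in different forms.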

Unicode normalization: why the same text is not always the same

Unicode makes it possible to type names and words from many languages, but it also means the "same" character can be represented in more than one way. If you compare strings by raw bytes, or store one form but later receive another, you can get duplicates, failed logins, and confusing "email already in use" errors.

A simple example is accented characters. The letter "é" can be a single code point (U+00E9), or two code points: "e" (U+0065) plus a combining accent (U+0301). They look identical to a person, but your database may treat them as different.
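The difference is easy to reproduce in Python with the standard `unicodedata` module. The strings here are illustrative only.

```python
import unicodedata

composed = "caf\u00e9"      # "é" as a single code point (U+00E9)
decomposed = "cafe\u0301"   # "e" (U+0065) plus combining acute accent (U+0301)

# Identical on screen, different in memory.
print(composed == decomposed)
print(len(composed), len(decomposed))

# After normalization both become the same string.
same_after_nfc = (
    unicodedata.normalize("NFC", composed)
    == unicodedata.normalize("NFC", decomposed)
)
print(same_after_nfc)
```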

NFC vs NFKC (and why it matters)

Normalization converts text into a consistent form.

- NFC (Normalization Form C) composes characters when possible. It usually keeps what users intended while making equality checks reliable.

- NFKC goes further by converting compatibility characters into simpler equivalents (for example, some full-width forms). This can improve consistency, but it can also change meaning and interact badly with spoofing and confusables if you apply it blindly.

For email handling, NFC is often the safest default for "make these equal" comparisons.
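To see how the two forms differ, here is a small Python comparison using full-width letters as the compatibility characters. It only illustrates the behavior and is not a recommendation to apply NFKC to addresses.

```python
import unicodedata

fullwidth = "\uff45\uff58"  # full-width "ｅｘ" (U+FF45, U+FF58)

nfc = unicodedata.normalize("NFC", fullwidth)
nfkc = unicodedata.normalize("NFKC", fullwidth)

# NFC leaves compatibility characters alone; NFKC rewrites them to plain ASCII.
print(nfc == fullwidth)
print(nfkc)
```

Because NFKC changes what the user typed, applying it to a stored address can produce a different mailbox name than the one they entered.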

Case handling: what to lowercase (and what not to)

Case rules differ between the two sides of an address:

- Domain part (right of @): safe to treat as case-insensitive. Lowercasing here is standard.

- Local part (left of @): technically case-sensitive, even though many providers ignore case. Lowercasing it can merge two distinct addresses on some systems.

A practical approach: lowercase and normalize the domain; keep the local part as the user entered it, while also storing a canonical form for comparisons.
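As a sketch of that policy in Python, the hypothetical helper below builds a comparison form. It trims surrounding whitespace, normalizes to NFC, lowercases only the domain, and leaves the local part's case untouched. Whether NFC is also right for your local parts is a policy assumption.

```python
import unicodedata


def canonical_email(raw: str) -> str:
    """Build the form used for comparisons; store the raw input separately."""
    address = unicodedata.normalize("NFC", raw.strip())
    local, at, domain = address.rpartition("@")
    if not (at and local and domain):
        raise ValueError("expected a local part, '@', and a domain")
    # Only the domain is lowercased; the local part keeps the user's case.
    return f"{local}@{domain.lower()}"


print(canonical_email(" Marta@BÜCHER.Example "))
```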

When to normalize (and when to preserve)

Normalize at the moments where consistency matters:

- On input: normalize to NFC before validation and before checking "already registered."

- On storage: keep the original string (for display and support) and a canonical form (for search and dedup).

- On comparison: compare using the canonical form, not raw input.

Even with a good validation service, your app still needs a clear normalization and case policy so legitimate users aren’t blocked by invisible character differences.

Where production systems usually fail

Most bugs with internationalized email addresses happen at the seams, where one system makes a different assumption than the next. The address looks fine to a user, but something in the chain changes it, rejects it, or treats two identical-looking values as different.

A common failure starts in the signup form. Frontend rules often allow only A-Z, 0-9, and a few symbols. Mobile keyboards insert smart punctuation, and autofill adds a trailing space you can’t see. Auto-lowercasing can also behave oddly outside basic ASCII.

On the backend, small cleanup steps can be destructive. Trimming is good, but removing "non-printable" characters can delete valid Unicode marks. Length limits are another quiet problem: internationalized domains can grow when converted to punycode, and you can hit a limit you didn’t expect.
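The length growth can be checked directly. This Python sketch again assumes the standard library `idna` codec (IDNA 2003) and uses hypothetical names. The 63-octet label limit comes from DNS, and 253 is the commonly used cap for a full name in text form.

```python
from typing import Optional

MAX_LABEL_OCTETS = 63    # DNS limit for a single label
MAX_DOMAIN_OCTETS = 253  # commonly used limit for the whole name in text form


def ascii_domain_or_none(domain: str) -> Optional[str]:
    """Return the ASCII form if it fits DNS length limits, otherwise None."""
    try:
        # The codec itself raises UnicodeError for empty or over-long labels.
        ascii_domain = domain.encode("idna").decode("ascii")
    except UnicodeError:
        return None
    if len(ascii_domain) > MAX_DOMAIN_OCTETS:
        return None
    if any(len(label) > MAX_LABEL_OCTETS for label in ascii_domain.split(".")):
        return None
    return ascii_domain


short = "bücher.example"
print(len(short), len(ascii_domain_or_none(short)))  # the ASCII form is longer

# 61 visible characters fit under 63, but the punycode form does not.
print(ascii_domain_or_none("ü" * 61 + ".example"))
```

Size database columns and input limits against the ASCII form, not the form the user sees.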

Databases add their own traps. Collation and comparison rules decide whether two strings are equal. If one system stores a composed form and another later sends a decomposed form, uniqueness checks can fail. That can create duplicate accounts or block a user because your "already exists" query doesn’t match what was stored.

Problems show up most often in:

- Client-side validation and input widgets

- Server-side sanitation, trimming, and length limits

- Database collation and unique indexes

- Integrations (CRMs, analytics, support tools, log processors)

- Outbound email systems that don’t support SMTPUTF8

Outbound mail is where it becomes unavoidable. If your mail server or a downstream relay doesn’t support SMTPUTF8, it may refuse to send to a mailbox with non-ASCII characters in the local part. Users can sign up, but never receive a verification email.

A realistic failure chain looks like this: a user registers with a Unicode domain, your app stores it, your CRM normalizes it differently, and your email provider refuses SMTPUTF8. Now you have one user, two strings that don’t match, and a welcome email that never arrives.

Step-by-step: a safer validation workflow

A safer workflow starts by separating two goals: don’t block real people at signup, and store something you can compare, send to, and support later.

- Preserve the user’s input. Keep the address exactly as typed, then remove only obvious surrounding whitespace. Avoid "helpful" edits like changing case, removing accents, deleting dots, or stripping plus-tags. Those changes can silently turn one address into another.

- Do a real syntax check. Treat it as "is this structurally possible," not "will mail definitely work." Modern rules are wider than most regexes, especially when international characters are involved.

- Decide on normalization and uniqueness. Store the raw address plus a canonical form (often NFC) for equality checks. If email is a unique key in your system, write down whether visually identical but differently encoded input should count as the same account.

- Handle the domain correctly. Normalize the domain and convert it to ASCII (punycode) before any DNS work. Then verify the domain and check for MX records (and possibly fallback A/AAAA if your policy allows).

- Make a policy for Unicode local parts. If your outbound provider can’t deliver SMTPUTF8 reliably, accept the signup only if you have a fallback plan (in-app verification, alternate contact method, or a clear warning before you promise email delivery).

Keep a small set of logs around validation outcomes so support can troubleshoot edge cases without guessing.
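Put together, the workflow might be sketched like this in Python. Everything named here (`SignupEmail`, `check_signup_email`, the `mx_lookup` callback) is hypothetical. The syntax check is deliberately minimal, and the domain conversion assumes the standard library `idna` codec (IDNA 2003). The MX lookup is injected as a function so the sketch does not depend on a particular DNS library.

```python
import unicodedata
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class SignupEmail:
    raw: str                           # exactly what the user typed
    canonical: Optional[str] = None    # form used for equality and uniqueness
    ascii_domain: Optional[str] = None
    unicode_local: bool = False        # True means delivery needs SMTPUTF8
    has_mx: Optional[bool] = None
    error: Optional[str] = None


def check_signup_email(raw: str, mx_lookup: Callable[[str], bool]) -> SignupEmail:
    result = SignupEmail(raw=raw)
    # Trim surrounding whitespace only, then normalize to NFC for comparisons.
    address = unicodedata.normalize("NFC", raw.strip())
    # Structural check only: a local part, an "@", and a domain.
    local, at, domain = address.rpartition("@")
    if not (at and local and domain):
        result.error = "syntax"
        return result
    try:
        # Raises UnicodeError on empty or over-long labels.
        result.ascii_domain = domain.lower().encode("idna").decode("ascii")
    except UnicodeError:
        result.error = "domain"
        return result
    result.canonical = f"{local}@{result.ascii_domain}"
    # A Unicode local part is not an error; it feeds the policy decision.
    result.unicode_local = not local.isascii()
    # DNS work always uses the ASCII form of the domain.
    result.has_mx = mx_lookup(result.ascii_domain)
    return result


# Usage with a stand-in lookup; a real one would query DNS.
fake_mx = lambda ascii_domain: ascii_domain == "xn--bcher-kva.example"
checked = check_signup_email("  márta@Bücher.example ", fake_mx)
print(checked)
```

Returning a structured result instead of a boolean also gives you something concrete to log for each validation decision.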

Validation vs deliverability: what you can prove

People often say "email validation" when they mean "will my message reach a real person." That’s deliverability, and you can’t fully prove it at signup. With internationalized email addresses, it’s even easier to confuse "looks valid" with "will work everywhere."

Validation can still give strong signals before you store an address or send to it:

- Syntax is acceptable (including SMTPUTF8 rules when relevant)

- The domain exists and can be resolved

- MX records are present (or the domain is configured to receive mail)

- The address or domain looks risky (for example, disposable providers)

But validation can’t guarantee the inbox exists or will accept your message. Many providers block mailbox probing, accept mail for unknown users and then bounce later, or change policies without warning.

A safer approach is two-stage:

- Validate at signup to catch obvious problems, reduce typos, and prevent low-quality signups.

- Confirm ownership with an email verification step (a click-to-confirm message). That’s what proves the user can receive mail and controls the address.

For "high-risk" results, hard blocks often create false rejections. Gentle friction tends to work better: allow signup, require confirmation before key actions, or ask for an extra signal only when risk is high.

Common mistakes that block legitimate users

The fastest way to lose real signups is to treat anything outside plain ASCII as invalid. Many legitimate providers support internationalized domains, and some users genuinely rely on them.

Mistake 1: "ASCII only" rules that are too strict

A lot of production code still assumes email means A-Z, 0-9, dots, and a short list of symbols. That breaks for IDN domains, and it guarantees false rejections in global products.

Mistake 2: Lowercasing or normalizing the whole address

Lowercasing the domain is fine. Lowercasing the local part can be risky.

Normalization helps, but doing it blindly can surprise users. Normalize intentionally, keep the original input, and test how downstream systems behave.

Common "helpful" transformations that backfire:

- Trimming too aggressively (or deleting characters you think are invisible)

- Lowercasing the entire address

- Applying one normalization form everywhere without testing exports and integrations

- Auto-removing dots or plus-tags (provider-specific behavior)

Mistake 3: Showing punycode to users

Your backend may need punycode for DNS checks, but your UI should usually display the domain in the user’s script. Showing xn--... in confirmations or error messages often looks suspicious, even when the address is correct.

Mistake 4: Assuming Unicode local parts always work

Even if an address is valid by spec, not every mail server supports SMTPUTF8. If you accept Unicode local parts, be ready for deliverability differences across providers.

Mistake 5: Ignoring paste artifacts

Copy-paste introduces non-breaking spaces, zero-width characters, and stray whitespace around @. Users can’t see these issues, but your validation will.

Example: a user pastes name@пример.рф with a trailing space. If you validate before trimming, you reject it. If you over-sanitize, you may alter the local part.
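One way to handle this without over-sanitizing is to trim first, then report hidden characters instead of deleting them. The Python sketch below uses Unicode general categories to find them. The function name is hypothetical, and the category list is an assumption you may want to tune.

```python
import unicodedata


def hidden_characters(text: str) -> list[tuple[int, str]]:
    """Return (index, name) for characters users usually cannot see."""
    found = []
    for index, ch in enumerate(text):
        # Cf: format characters such as zero-width space; Cc: control
        # characters; Zs: space separators such as no-break space.
        if unicodedata.category(ch) in ("Cf", "Cc", "Zs"):
            found.append((index, unicodedata.name(ch, f"U+{ord(ch):04X}")))
    return found


pasted = "name@пример.рф \u00a0"   # trailing space plus a no-break space
trimmed = pasted.strip()            # str.strip() also removes the no-break space
print(hidden_characters(trimmed))   # nothing hidden remains

# A zero-width space inside the address survives trimming, so report it.
print(hidden_characters("na\u200bme@example.com"))
```

Reporting the position lets you show the user a correctable error rather than silently changing the local part.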

Quick checks before you ship

Internationalized email addresses can work end to end, but only if every layer agrees on what it will accept, store, and send. Before release, run checks that match production behavior, not just unit tests.

A practical pre-ship checklist

- Confirm the signup form accepts Unicode where you intend (domain, local part, or both). If you don’t support Unicode local parts, say so at the point of entry.

- Pick one normalization rule (often NFC) and apply it consistently in the browser, API, background jobs, and admin tools.

- Convert the domain to ASCII (punycode) before DNS work, and store a comparable form so lookups don’t drift.

- Make account uniqueness match login and lookup rules. Decide whether visually identical but differently encoded addresses should map to one user.

- Test outbound email on your real mail path (provider, relay, library) using examples with an IDN domain and a Unicode local part.

Checks people forget (until support tickets arrive)

Make sure logs and admin tools can display the user-friendly version without turning it into mojibake or breaking exports. Also confirm every system that touches the address can handle it: analytics events, CRM sync, webhook payloads, CSV exports, and data warehouse ingestion. Many failures happen on export, not on input.

A concrete test: run the same address through signup, login, password reset, and any account-merge flow. If it passes signup but fails later, you have a mismatch in normalization, storage, or comparisons.

Example scenario and next steps

A user signs up with marta@bücher.example. It looks normal because the domain is written in Unicode. Your system needs to treat the domain as an IDN.

On the domain side, keep the user-friendly display but validate the DNS-safe form. Convert bücher.example to punycode (xn--bcher-kva.example) before you do MX lookups. If you only check the Unicode form, some DNS libraries will fail or behave inconsistently.

Now the subtle part: the same email can be typed in multiple ways that look identical. Suppose the user enters márta@bücher.example, but the "á" can be composed as a single character or as "a" plus a combining accent. If you store raw input only, a user can end up with two accounts that look the same in your UI.

Normalization fixes this. Normalize to NFC before you compare, store, rate-limit, or dedupe, and apply the same steps everywhere the address is touched.

The local part (everything before @) is where you need a clear policy. SMTPUTF8 allows Unicode local parts, but not every provider supports it end to end. A practical policy for many products is:

- Accept ASCII local parts by default.

- If the local part contains Unicode, accept it but require confirmation or show a clear warning.

- If your product depends heavily on email delivery (password resets, invoices), block Unicode local parts unless you’ve tested SMTPUTF8 support across your entire mail stack.
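That three-way policy can be written down as a small function, sketched here in Python. The names `Decision` and `local_part_policy` and both flags are hypothetical. How you determine that SMTPUTF8 has been tested across your mail stack is outside this sketch.

```python
from enum import Enum


class Decision(Enum):
    ACCEPT = "accept"
    CONFIRM = "accept_with_confirmation"
    BLOCK = "block"


def local_part_policy(
    local: str, *, email_critical: bool, smtputf8_tested: bool
) -> Decision:
    """Map a local part to accept, confirm, or block."""
    if local.isascii():
        return Decision.ACCEPT
    # Unicode local part: block only when email delivery is essential
    # and the mail stack has not been tested with SMTPUTF8.
    if email_critical and not smtputf8_tested:
        return Decision.BLOCK
    return Decision.CONFIRM


print(local_part_policy("marta", email_critical=True, smtputf8_tested=False))
print(local_part_policy("márta", email_critical=True, smtputf8_tested=False))
print(local_part_policy("márta", email_critical=False, smtputf8_tested=False))
```

Keeping the decision in one function makes it easier to apply the same rule across web, mobile, and admin tools.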

If you want a single place to run syntax checks plus domain and MX verification (and screen disposable providers), an email validation API like Verimail (verimail.co) can provide those signals without relying on a brittle regex.

Next steps that keep you safe without rejecting real users:

- Write down your policy for Unicode local parts (accept, warn, or block) and keep it consistent across web, mobile, and admin tools.

- Normalize to NFC before storing, comparing, or deduping.

- Test edge cases: IDN domains, mixed scripts, composed vs decomposed characters, and pasted input.

- Monitor outcomes: confirmation success rate, bounces, and "email already in use" errors after normalization.

- Keep enough logs around validation decisions to debug false rejections quickly.

FAQ

Why isn’t a single email regex enough for production?

A regex can only judge the shape of the text, not whether the domain can receive mail or whether your mail stack can deliver to that address. Regexes also tend to be either too strict, blocking valid internationalized domains, or too loose, accepting inputs that will bounce or fail later.

Should I allow Unicode characters in email addresses at signup?

If you have a global audience, allowing Unicode in the domain part is usually safe because it can be converted to an ASCII form for DNS checks. Unicode in the local part is a bigger decision because you must confirm your entire delivery path supports SMTPUTF8, otherwise you risk signups that can’t receive verification or resets.

How do I handle IDN domains and punycode correctly?

Keep what the user typed for display, but convert the domain to its ASCII form before doing DNS or MX lookups. Storing the ASCII form alongside the original helps you dedupe consistently and avoids mismatches across libraries that handle IDNs differently.

Which Unicode normalization should I use for emails?

Normalize early, ideally before you validate, compare, or check uniqueness, so visually identical inputs don’t create separate accounts. NFC is a practical default because it reduces “same-looking but different bytes” issues without rewriting characters as aggressively as compatibility normalization.

Is it safe to lowercase an email address?

Lowercase the domain because domain names are case-insensitive in practice and this prevents duplicates. Avoid lowercasing the local part by default because it can change meaning on systems that treat it as case-sensitive, and it can also create surprises with non-ASCII case rules.

How should I store emails to avoid duplicates and “already in use” bugs?

Store both the original input and a canonical form you use for comparisons, search, and unique constraints. The canonical form is typically trimmed of surrounding whitespace, normalized to NFC, and has the domain lowercased and converted to ASCII when needed, while the display form stays user-friendly.

What domain checks actually help before I send email?

Do a syntax check first, then verify the domain can be resolved and check for MX records using the ASCII version of the domain. This catches typos and dead domains early, but it still doesn’t prove a specific inbox exists, so you should combine it with email ownership confirmation.

Why does an email pass signup but fail for password resets later?

Passing signup only shows that your app accepted the address; the reset fails when the outbound path rejects it. A common cause is lack of SMTPUTF8 support somewhere between your app, your email provider, and downstream relays, which can break delivery for Unicode local parts even when the address is valid.

How do I deal with copy-paste artifacts like invisible spaces?

Trim obvious surrounding whitespace, but be careful with “cleaning” that removes characters you can’t see, because some may be meaningful in Unicode. It also helps to detect and reject or warn on hidden characters like zero-width spaces so users can correct the input instead of being silently blocked.

How can Verimail help validate internationalized emails without breaking signups?

Use it to replace brittle, inconsistent validation logic with a single API call that checks syntax in an RFC-compliant way, verifies the domain, looks up MX records, and flags risky inputs like disposable email domains. It’s especially useful when you want fast, consistent decisions during signup without building and maintaining your own multi-stage pipeline.